Interoperability Maturity Indicators

Read how to apply the FAIR Maturity Indicators to measure the INTEROPERABILITY of the data and metadata.

- Interoperability of data is compared with your FAIR objectives to identify and make improvements in an iterative manner

Overview

Implementation of the FAIR guidelines is measured by a framework of metrics that are now termed Maturity Indicators (MI) [1, 2, 3]. This method focuses on the maturity indicators that measure the interoperability of the data and metadata. It is a questionnaire for manual evaluation of the FAIR MIs for Interoperability, which are recorded in the FAIRsharing registry [4, 5]. It is important to understand that FAIR is intended to be aspirational. This means that evaluation against the FAIR MIs is used to understand how to improve the FAIRness of the data.

The FAIR MIs have now reached their second generation as a result of community feedback and the need to automate FAIR evaluation; automated evaluation is available through The FAIR Evaluator, a public demonstrator developed by Mark Wilkinson and collaborators [6, 7]. The second-generation MIs have been adopted for this method in preparation for automated evaluation once it is ready for production use in industry. Existing FAIR evaluation tools and services have been compared by the Research Data Alliance (RDA) [8], which has also released the FAIR Data Maturity Model: specification and guidelines [9].

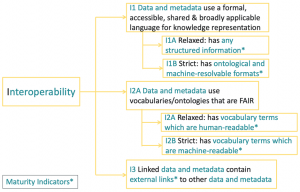

The MIs for Interoperability are illustrated below:

How To

This questionnaire enables manual evaluation of MIs to test for Interoperability.

- Is a knowledge representation language used that conveys any kind of structured information?

- Indicates the use of a formal, accessible, shared, and broadly applicable language for knowledge representation, under a relaxed interpretation.

- Example: any kind of structured information is sufficient.

- DOI: 10.25504/FAIRsharing.qUroF (Gen2-MI-I1A)

- Is a knowledge representation language used that is ontologically grounded and machine-resolvable?

- Indicates the use of a formal, accessible, shared, and broadly applicable language for knowledge representation, under a strict interpretation.

- Example: the language must be ontologically grounded and machine-resolvable.

- DOI: 10.25504/FAIRsharing.l8fVBn (Gen2-MI-I1B)

- Do the data and metadata make relaxed use of ontologies and vocabularies that are themselves FAIR?

- Indicates relaxed use of vocabularies whose terms resolve to a human-readable page. FAIR ontologies and their vocabularies can be evaluated against the OBO Foundry principles [10, 11].

- Example: vocabulary terms given as URLs

- DOI: 10.25504/FAIRsharing.E3Nr1d (Gen2-MI-I2A)

- Do the data and metadata make strict use of ontologies and vocabularies that are themselves FAIR?

- Indicates strict use of vocabularies whose terms resolve to machine-readable linked identifiers. FAIR ontologies and their vocabularies can be evaluated against the OBO Foundry principles [10, 11].

- Example: vocabulary identifiers that resolve to machine-readable linked data

- DOI: 10.25504/FAIRsharing.RCUuvt (Gen2-MI-I2B)

- Does the metadata contain links that resolve to other data sources?

- Indicates whether the metadata contain links to other sources, i.e. sources other than the one holding the data.

- Example: identifiers that cross-reference records in external resources

- DOI: 10.25504/FAIRsharing.LLXjWx (Gen2-MI-I3)


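The five questions can be approximated offline for a metadata record serialised as JSON-LD. The sketch below is a minimal illustration under stated assumptions, not the Gen2 MI tests run by The FAIR Evaluator, which dereference identifiers over the network. The function names, the `KNOWN_VOCAB_PREFIXES` allow-list, and the example hosts are hypothetical; treating a JSON-LD `@context` as evidence of an ontologically grounded language (I1B) is only a crude proxy.

```python
# Offline heuristics only: the real Gen2 MI tests resolve identifiers over HTTP.
from urllib.parse import urlparse

# Hypothetical allow-list of vocabulary namespaces treated as FAIR (used for I2B).
KNOWN_VOCAB_PREFIXES = (
    "http://purl.obolibrary.org/obo/",  # OBO Foundry ontology term IRIs
    "https://schema.org/",
)


def is_http_iri(value):
    """True if value is an absolute http(s) IRI with a host part."""
    if not isinstance(value, str):
        return False
    parsed = urlparse(value)
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


def iter_strings(node):
    """Yield every string nested in a parsed JSON(-LD) record, skipping @context."""
    if isinstance(node, str):
        yield node
    elif isinstance(node, dict):
        for key, value in node.items():
            if key == "@context":
                continue  # the context declares vocabularies; it is not a data link
            yield from iter_strings(value)
    elif isinstance(node, list):
        for item in node:
            yield from iter_strings(item)


def check_interoperability(record, own_host):
    """Approximate yes/no answers to I1A-I3 for one parsed JSON metadata record."""
    iris = [s for s in iter_strings(record) if is_http_iri(s)]
    # Links leaving the record's own host: candidate vocabulary terms or cross-references.
    outward = [i for i in iris if urlparse(i).netloc != own_host]
    vocab = [i for i in outward if i.startswith(KNOWN_VOCAB_PREFIXES)]
    cross_refs = [i for i in outward if i not in vocab]
    return {
        # I1A (relaxed): any structured information; here, a non-empty JSON object.
        "I1A": isinstance(record, dict) and len(record) > 0,
        # I1B (strict), crude proxy: a JSON-LD @context implies RDF grounding.
        "I1B": isinstance(record, dict) and "@context" in record,
        # I2A (relaxed): at least one term is a URL on another host that a person could open.
        "I2A": len(outward) > 0,
        # I2B (strict): at least one IRI from a known vocabulary namespace.
        "I2B": len(vocab) > 0,
        # I3: at least one link to a source that is neither a vocabulary nor our own host.
        "I3": len(cross_refs) > 0,
    }


if __name__ == "__main__":
    record = {
        "@context": "https://schema.org/",
        "@id": "https://data.example.org/dataset/42",
        "@type": "Dataset",
        "about": "http://purl.obolibrary.org/obo/NCBITaxon_9606",
        "sameAs": "https://external.example.com/records/7",
    }
    print(check_interoperability(record, "data.example.org"))
```

Because the strict checks rest on a hand-maintained namespace list, a manual reviewer should still confirm that each vocabulary term actually resolves before answering "yes".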
References and Resources

- The FAIR metrics group repository on GitHub at fairmetrics.org

- Wilkinson et al 2018 A design framework and exemplar metrics for FAIRness. Scientific Data 5, 180118. DOI: 10.1038/sdata.2018.118

- Supplementary information for Wilkinson et al 2018: https://github.com/FAIRMetrics/Metrics/tree/master/Evaluation_Of_Metrics

- FAIR Maturity Indicators and Tools: https://github.com/FAIRMetrics/Metrics/tree/master/MaturityIndicators

- FAIRsharing registry search for FAIR metrics: https://fairsharing.org/standards/?q=FAIR+maturity+indicator

- Second generation Maturity Indicators tests: https://github.com/FAIRMetrics/Metrics/tree/master/MaturityIndicators/Gen2

- A public demonstration server for The FAIR Evaluator: https://w3id.org/AmIFAIR

- Research Data Alliance 2020 Results of an Analysis of Existing FAIR assessment tools: https://preview.tinyurl.com/yausl4s4

- Research Data Alliance 2020 FAIR Data Maturity Model: specification and guidelines: https://preview.tinyurl.com/y5tgby6w

- The OBO Foundry Principles Overview: http://www.obofoundry.org/principles/fp-000-summary.html

- Smith et al 2007 The OBO Foundry: coordinated evolution of ontologies to support biomedical data integration. Nat Biotechnol 25(11): 1251. https://doi.org/10.1038/nbt1346

Resources

- RDA FAIR Data Maturity Model

- Specification, guidelines and evaluation (manual sheet)

- COLID – Quick start

- Corporate Linked Data (COLID) by Bayer AG

- A data catalogue for corporate environments that assigns URIs as persistent, globally unique identifiers to any resource. The incorporated network proxy ensures that these URIs are resolvable and can be used to access those assets directly.

- COLID also provides a metadata repository for data assets based upon semantic models.

At a Glance

Related methods

- Findability FAIR Maturity Indicators

- Accessibility FAIR Maturity Indicators

- Reusability FAIR Maturity Indicators

Setting

- Evaluation of interoperability to improve the FAIRness of the data and metadata

Team

- Scientist generating or collecting the data and metadata

- Data steward for advice and guidance

Timing

- 0.5 day to answer the questions; less if evaluation is automated

- Additional time will depend on implementation of the FAIR improvements

Difficulty

- High

Top Tips

- How FAIR are your data? Checklist by Sara Jones & Marjan Grootveld

- Knowledge representation languages, vocabularies and ontologies that are themselves “grounded” in the FAIR principles are recommended for data interoperability.

- FAIR ontologies and vocabularies can be evaluated against community standards such as the Open Biomedical Ontologies (OBO) Foundry principles [10, 11].

- Relaxed maturity indicators are sufficient for manual evaluation, whereas stricter indicators are necessary for automated evaluation.
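The last tip separates relaxed indicators, which suffice for manual evaluation, from strict ones, which automated evaluation needs. A minimal way to record the manual questionnaire answers and turn them into an improvement list is sketched below. The MI codes and FAIRsharing DOIs come from the questionnaire; the class and function names, the policy of listing relaxed indicators before strict ones, and filing I3 (which has no relaxed/strict pair) with the relaxed indicators are assumptions of this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MaturityIndicator:
    code: str     # Gen2 MI code from the questionnaire
    doi: str      # FAIRsharing DOI from the questionnaire
    strict: bool  # True for the strict variants (I1B, I2B); I3 filed as relaxed (assumption)

    @property
    def url(self):
        """Resolvable link to the MI record via the doi.org proxy."""
        return f"https://doi.org/{self.doi}"


INTEROPERABILITY_MIS = (
    MaturityIndicator("Gen2-MI-I1A", "10.25504/FAIRsharing.qUroF", strict=False),
    MaturityIndicator("Gen2-MI-I1B", "10.25504/FAIRsharing.l8fVBn", strict=True),
    MaturityIndicator("Gen2-MI-I2A", "10.25504/FAIRsharing.E3Nr1d", strict=False),
    MaturityIndicator("Gen2-MI-I2B", "10.25504/FAIRsharing.RCUuvt", strict=True),
    MaturityIndicator("Gen2-MI-I3", "10.25504/FAIRsharing.LLXjWx", strict=False),
)


def improvement_targets(answers):
    """List MI codes not answered 'yes' (missing counts as 'no'), relaxed before strict."""
    unmet = [mi for mi in INTEROPERABILITY_MIS if not answers.get(mi.code, False)]
    # sorted() is stable and False < True, so relaxed MIs come first in questionnaire order.
    return [mi.code for mi in sorted(unmet, key=lambda mi: mi.strict)]


def meets_strict_indicators(answers):
    """True only if every strict MI was answered 'yes' (necessary for automated evaluation)."""
    return all(answers.get(mi.code, False) for mi in INTEROPERABILITY_MIS if mi.strict)


if __name__ == "__main__":
    answers = {"Gen2-MI-I1A": True, "Gen2-MI-I2A": True, "Gen2-MI-I1B": False}
    for code in improvement_targets(answers):
        print("Improve:", code)
    print("Strict MIs met:", meets_strict_indicators(answers))
```

Re-running the sheet after each round of changes gives the iterative comparison against your FAIR objectives that this method aims for.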